- Introduction and Application Architecture

- Setting up the React Application

- Setting up the Spring WebApplication

- Setting up the Python Application

- Container-ization of the Services

- Container-ization of everything else

- Introduction to Kubernetes

- Kubernetes in Practice – Pods

- Kubernetes in Practice – Services

- Kubernetes in Practice – Deployments

- Kubernetes and everything else in Practice

- Kubernetes Volumes – in Practice

I promise, and I am not exaggerating, that by the end of this article you will ask yourself: “Why don’t we call it Supernetes?”.

If you have followed this series from the beginning, we have covered a lot of ground, and you might worry that this will be the hardest part. It is actually the simplest. The only reason learning Kubernetes is daunting is the “everything else”, and we have covered that thoroughly.

What is Kubernetes

At the end of the article Container-ization of everything else, we were left with one question. Let’s expand on it in a Q&A format:

Q: How do we scale containers?

A: We spin up another one.

Q: How do we share the load between them? What if the server is already used to the maximum and we need another server for our container? How do we make the best use of our hardware?

A: Ahm… Ermm… (Let me google).

Q: How do we roll out updates without breaking anything? And if we do break something, how do we go back to the working version?

Kubernetes solves all these problems (and more!). My attempt to sum up Kubernetes in one sentence would be: “Kubernetes is a Container Orchestrator that abstracts the underlying infrastructure (where the containers are run)”.

We already have a faint idea about Container Orchestration, and we will cover it in upcoming articles, but this is the first time we are reading about “abstracts the underlying infrastructure”, so let’s take a close-up look at that part.

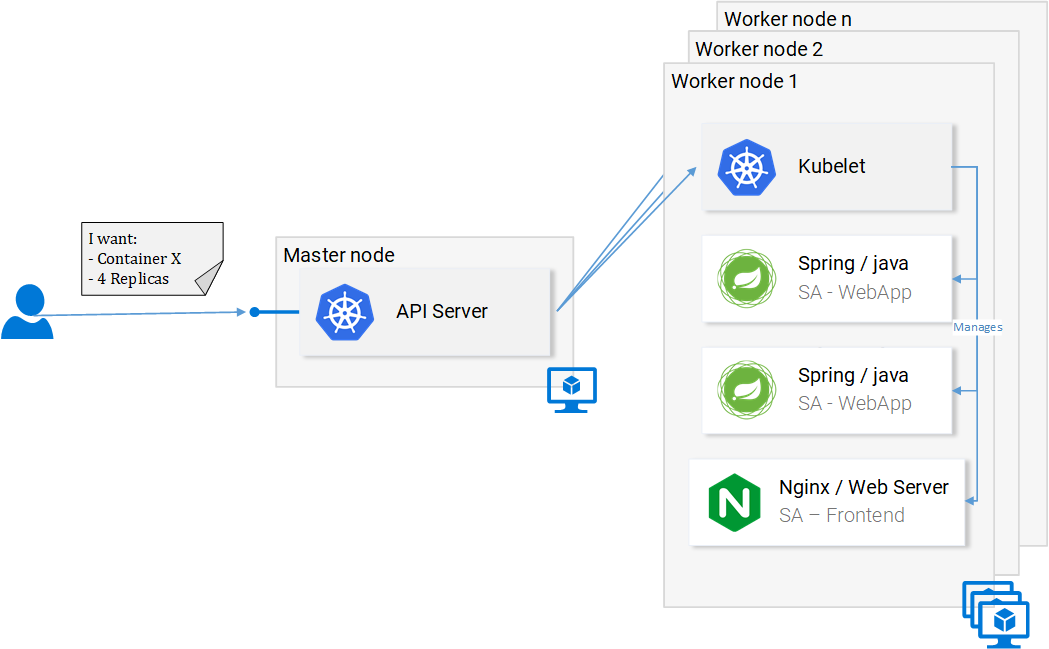

Kubernetes abstracts the underlying infrastructure by providing us with a simple API to which we send requests, and Kubernetes then does its best to fulfill them. For example, it is as simple as requesting “Kubernetes, spin up 4 containers of the image x”, and Kubernetes will find under-utilized nodes on which to spin up the new containers (see Fig. 1).
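To make “find under-utilized nodes” a bit more concrete, here is a deliberately simplified placement sketch in Python. It is not how the real kube-scheduler works (that one filters and scores nodes using resource requests, affinity rules and more); it only assumes that each node has a fixed number of container slots and greedily places each new container on the least-utilized node with a free slot. The node names, capacities and existing containers are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """A hypothetical worker node with a fixed number of container slots."""
    name: str
    capacity: int
    containers: list = field(default_factory=list)

    def utilization(self) -> float:
        # Fraction of slots already in use (0.0 = idle, 1.0 = full).
        return len(self.containers) / self.capacity


def schedule(nodes: list, image: str, replicas: int) -> dict:
    """Place `replicas` containers of `image`, each on the least-utilized node with room.

    Returns a mapping of node name -> number of new containers placed there.
    Raises RuntimeError if the cluster runs out of capacity.
    """
    placed = {}
    for i in range(replicas):
        candidates = [n for n in nodes if len(n.containers) < n.capacity]
        if not candidates:
            raise RuntimeError(f"no capacity left for replica {i + 1} of {image}")
        # Greedy choice: the node with the lowest current utilization wins
        # (ties go to the node listed first).
        target = min(candidates, key=lambda n: n.utilization())
        target.containers.append(f"{image}-{i}")
        placed[target.name] = placed.get(target.name, 0) + 1
    return placed


if __name__ == "__main__":
    cluster = [
        Node("node-a", capacity=4, containers=["db", "cache", "api"]),
        Node("node-b", capacity=4, containers=["web"]),
        Node("node-c", capacity=4),
    ]
    # "Kubernetes, spin up 4 containers of the image x"
    print(schedule(cluster, "x", replicas=4))
```

Running it places two containers on node-c and two on node-b, leaving the nearly full node-a alone. The developer only stated “4 containers of x”; deciding where they go is the orchestrator’s job.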

What does this mean for the developer? They don’t have to care about the number of nodes, where containers are started or how they communicate. They don’t deal with hardware optimization or worry about nodes going down (and, by Murphy’s Law, they will go down): Kubernetes spins up the affected containers on the nodes that are still running, new nodes can be added to the Kubernetes cluster in the meantime, and it all happens as efficiently as the available capacity allows.
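The self-healing described above follows a reconciliation idea: compare the desired state (how many replicas you asked for) with the actual state, and act on the difference. The sketch below is a toy model built on that assumption, not Kubernetes code: when a hypothetical node fails, the replicas it was running are lost, and the loop recreates them on the least-loaded surviving nodes. The node names and the “least-loaded first” rule are illustrative choices.

```python
def reconcile(desired_replicas: int, cluster: dict, failed: set) -> dict:
    """Bring the number of running replicas back to `desired_replicas`.

    cluster: mapping of node name -> number of replicas running on that node.
    failed:  names of nodes that have gone down.
    Returns a new mapping that contains only the healthy nodes.
    """
    # Replicas on failed nodes are gone; keep only the healthy nodes.
    healthy = {node: count for node, count in cluster.items() if node not in failed}
    if not healthy:
        raise RuntimeError("no healthy nodes left to run replicas on")

    running = sum(healthy.values())
    # Actual < desired: start missing replicas on the least-loaded healthy node.
    while running < desired_replicas:
        target = min(healthy, key=healthy.get)
        healthy[target] += 1
        running += 1
    # Actual > desired (e.g. after scaling down): stop replicas on the busiest node.
    while running > desired_replicas:
        target = max(healthy, key=healthy.get)
        healthy[target] -= 1
        running -= 1
    return healthy


if __name__ == "__main__":
    before = {"node-a": 2, "node-b": 1, "node-c": 1}
    # Murphy's Law: node-a goes down, taking two replicas with it.
    print(reconcile(4, before, failed={"node-a"}))
```

After node-a fails, the loop restarts its two replicas on node-b and node-c, so four replicas are running again without anyone intervening by hand.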

In figure 1 we can see a couple of new things:

- API Server: our only way to interact with the cluster, be it starting or stopping another container (err *pods) or checking the current state, logs, etc.

- Kubelet: monitors the containers (err *pods) inside a node and communicates with the master node.

- *Pods: Initially just think of pods as containers.
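To see why “think of pods as containers” is a reasonable first approximation, here is a minimal Pod manifest built as a Python dict and printed as JSON (the API Server accepts JSON as well as YAML). The `apiVersion`, `kind`, `metadata` and `spec.containers` fields follow the real Pod schema; the pod name `x-pod`, the image `x` and port 80 are placeholders chosen for illustration.

```python
import json


def pod_manifest(name: str, image: str, port: int) -> dict:
    """Build a minimal Kubernetes Pod manifest (core/v1) wrapping a single container."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "labels": {"app": name}},
        "spec": {
            # A pod wraps one or more containers; here there is exactly one.
            "containers": [
                {
                    "name": name,
                    "image": image,
                    "ports": [{"containerPort": port}],
                }
            ]
        },
    }


if __name__ == "__main__":
    # Placeholder values: swap "x" for a real image, e.g. one built earlier in the series.
    print(json.dumps(pod_manifest("x-pod", "x", 80), indent=2))
```

Saving the output as `pod.json` and running `kubectl apply -f pod.json` sends it to the API Server; a node is then chosen for the pod, and that node’s kubelet starts the container and keeps monitoring it.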

And we will stop here, as diving deeper would only distract us, and we can always do that later. There are useful resources to learn from, like the official documentation (the hard way) or the excellent book Kubernetes in Action by Marko Lukša.

Standardizing the Cloud Service Providers

Another strong point that Kubernetes drives home is that it standardizes the Cloud Service Providers (CSPs). This is a bold statement, so let’s elaborate with an example:

An expert in Azure, Google Cloud Platform or some other CSP ends up working on a project hosted on an entirely different CSP, one they have no experience with. This can have many consequences, to name a few: they can miss the deadline, the company might need to hire more people, and so on.

With Kubernetes, by contrast, this isn’t a problem at all, because you execute the same commands against the API Server no matter the CSP. You declaratively tell the API Server what you want, and Kubernetes abstracts away how that is implemented on the CSP in question.
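A sketch of what “the same commands, no matter the CSP” looks like in practice: the declarative request for “4 containers of the image x” can be written as a Deployment manifest, and the identical file can be applied to a cluster on any provider. Only the kubectl context (which cluster you are talking to) changes. The context names `aks-demo`, `gke-demo` and `eks-demo` are hypothetical, and the code only builds the commands instead of running them.

```python
import json


def deployment_manifest(name: str, image: str, replicas: int) -> dict:
    """Declarative request: 'keep `replicas` pods of `image` running' (apps/v1 Deployment)."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,
            # The selector tells the Deployment which pods belong to it.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [{"name": name, "image": image}]},
            },
        },
    }


def apply_commands(manifest_path: str, contexts: list) -> list:
    """Build (but do not run) the same `kubectl apply` command for each cluster context."""
    return [["kubectl", "--context", ctx, "apply", "-f", manifest_path] for ctx in contexts]


if __name__ == "__main__":
    # Save this JSON as deployment.json to use it with the commands below.
    print(json.dumps(deployment_manifest("x", "x", replicas=4), indent=2))

    # Hypothetical contexts for clusters hosted on three different CSPs.
    for cmd in apply_commands("deployment.json", ["aks-demo", "gke-demo", "eks-demo"]):
        print(" ".join(cmd))
```

Nothing in the manifest is provider-specific: it states what we want (four replicas of x), and each cluster works out how to run them on its own nodes.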

Give it a second to sink in: this is an extremely powerful feature. For the company, it means they are not tied to a single CSP. They can calculate what their expenses would be on another CSP and simply move, because they still have the expertise and the people, and they can do it for cheaper!

Summary

Kubernetes benefits the team and the project: it simplifies deployments, scaling and resilience, and it enables us to consume any underlying infrastructure. And you know what? From now on, let’s call it Supernetes!

All that said, we still haven’t seen Kubernetes in practice. That will be our next article. Stay tuned!

If you enjoyed the article, please share and comment below!
